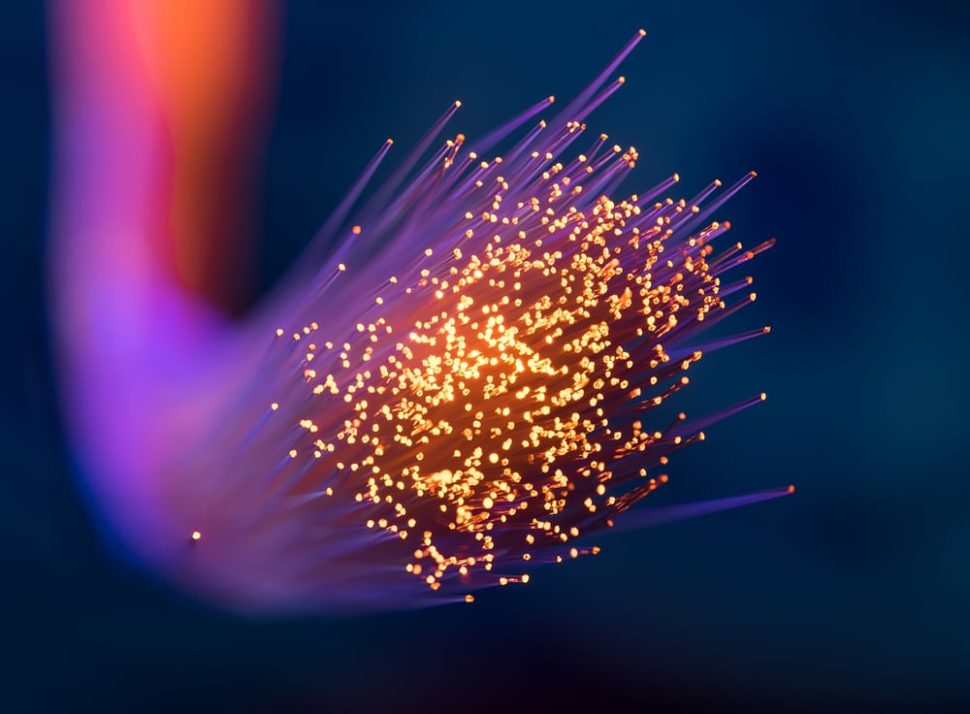

Scientists have reportedly created a new algorithm that will enable quick and easy access to Big Data.

In a joint study published in the journal IEEE Transactions on Network and Service Management, researchers from Samara University and the University of Missouri described a new algorithm that could provide faster and more reliable access to Big Data processing centers.

According to the Russian and American computer scientists who worked on the project, their algorithm relies on a unique routing method. This technique allows fast access to the world’s largest and most powerful data centers, providing a more efficient way of performing high-precision calculations.

“We offer the mechanism that can be in demand by the scientists who conduct experiments on the basis of the Large Hadron Collider at CERN,” said Andrey Sukhov, one of the authors of the study and a professor at the Department of Supercomputers and General Informatics of Samara University.

“They calculate tasks in the laboratories scattered all over the world, make inquiries to the computer centers of CERN. They also need to exchange both textual information and high-resolution streaming video online. The technology that we offer will help them with this.”

In their study, the researchers explained that the algorithm performs as intended only if four requirements are met: signal bandwidth, data transmission speed in Kbps, cloud service price, and cloud storage.

The algorithm reportedly finds the best constrained shortest path that achieves the desired quality and data transmission speed. At its peak, it can increase the speed of data transmission by up to 50 percent.
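To make the idea of a constrained shortest path concrete, here is a minimal Python sketch. It is not the researchers' Neighborhoods Method, whose internals are not described here; it is a generic label-setting search that finds the cheapest route whose total delay stays under a limit. The function name, the `(neighbor, cost, delay)` edge format, and the example network are all assumptions made for illustration.

```python
import heapq

def constrained_shortest_path(graph, source, target, max_delay):
    """Return (cost, delay, path) for the cheapest path whose total delay
    is at most max_delay, or None if no such path exists.

    graph: dict mapping node -> list of (neighbor, cost, delay) tuples,
    with non-negative costs and delays (hypothetical format).
    """
    # Labels are popped in order of increasing cost, then delay.
    heap = [(0, 0, source, [source])]
    # Per node, the (cost, delay) labels that have already been expanded.
    expanded = {}
    while heap:
        cost, delay, node, path = heapq.heappop(heap)
        if node == target:
            return cost, delay, path
        # Skip a label that an earlier one matches or beats on both criteria.
        if any(c <= cost and d <= delay for c, d in expanded.get(node, [])):
            continue
        expanded.setdefault(node, []).append((cost, delay))
        for neighbor, edge_cost, edge_delay in graph.get(node, []):
            new_delay = delay + edge_delay
            if new_delay <= max_delay:  # enforce the delay constraint
                heapq.heappush(
                    heap,
                    (cost + edge_cost, new_delay, neighbor, path + [neighbor]),
                )
    return None

# Hypothetical network: the route via B is cheap but slow,
# the route via C is more expensive but fast.
example_graph = {
    "A": [("B", 1, 10), ("C", 2, 3)],
    "B": [("D", 1, 10)],
    "C": [("D", 3, 3)],
}

print(constrained_shortest_path(example_graph, "A", "D", max_delay=25))
print(constrained_shortest_path(example_graph, "A", "D", max_delay=10))
```

With a loose delay limit the search returns the cheap route through B. Tightening the limit forces it onto the faster, costlier route through C, which is the trade-off a constrained shortest path captures.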

The new algorithm, called “The Neighborhoods Method,” allows frameworks to share their load and pathways with other networks. Its functionality also reportedly stays the same regardless of the type of Internet connection in use.

Sukhov and his colleagues said they plan to use the algorithm in their own projects. Sukhov added that other computer scientists interested in using the algorithm to calculate data about combustion reactions have also approached the team.
